Technology R&D Projects

Technology R&D projects guide CAI2R research on better ways to gather medical image data, process information, and inform care.

The projects focus on novel image acquisition and reconstruction methods, hardware and sensors, and biophysical modeling of tissue properties.

CAI2R is pushing the field toward an imaging paradigm in which fast, intelligent scans deliver richly informative, actionable health data. Our research is guided by three Technology R&D Projects, or TR&Ds.

TR&D 1

Reimagining the Future of Scanning: Intelligent Image Acquisition, Reconstruction, and Analysis

Principal investigators: Li Feng, PhD; Riccardo Lattanzi, PhD

In this project, we are building on advances made at our Center since its founding in 2014 to develop novel means of intelligent data acquisition, MRI quantification, and image interpretation with benefits for MR scanners spanning the range of magnetic field strengths. We are also exploring the potential of longitudinal MRI data for use in such applications as MR-guided therapy and disease monitoring.

Our long-standing goals have been to move biomedical imaging toward fast, simple, universal acquisitions that yield quantitative, informative parameters. In this project, we advance these goals by developing imaging-specific machine learning methods for every step of the imaging process and by investigating new sources of meaningful health information in image data.

In particular, we proceed along three lines of investigation. First, we aim to develop new methods for rapid motion-robust quantitative MRI that combines motion-sensor-guided acquisition and model-based deep learning reconstruction for efficient estimation of quantitative parameter maps. Second, we aim to diversify our rapid MRI methods to integrate new sampling schemes tailored for new applications. Third, we are exploring longitudinal image memory (e.g., prior image information available from a given patient or subject) and aim to leverage temporal correlations among multiple imaging sessions for applications like MR-guided radiotherapy, image reconstruction, and longitudinal health monitoring.

Our core expertise in pulse-sequence design, parallel imaging, compressed sensing, model-based image reconstruction, and machine learning allows us to question long-established assumptions about data acquisition, image formation, and even scanner design.

Prior iterations of this project have led to substantial breakthroughs in rapid, continuous, comprehensive MRI methods, in machine learning approaches to MR data acquisition and reconstruction, and in artificial intelligence (AI) algorithms for medical image analysis.

Some of our advances are already clinically available worldwide. For example, GRASP, a technique that produces clear MR images without requiring patients to hold their breath during exams, ships on Siemens MRI scanners under the name Compressed Sensing GRASP-VIBE.

CAI2R scientists were among the first to demonstrate the use of deep learning for reconstruction of MR data, and the first to show that such reconstructions are diagnostically interchangeable with traditional ones. Our scientists, through collaborations with NYU Center for Data Science, Facebook AI Research, and other research partners, are also advancing AI approaches to analysis and interpretation of medical image data.

Explore TR&D 1 on NIH RePORTER.

Note: At the time of founding of our Center, in September 2014, this project was titled “Toward Rapid Continuous Comprehensive MR Imaging: New Methods, New Paradigms, and New Applications.” In August 2019, when the NIH renewed our NCBIB mandate for a second funding period, the name was updated to “Reimagining the Future of Scanning: From Snapshots to Streaming, from Imitating the Eye to Emulating the Brain.” As put forth in our NCBIB proposal for the 2024-2029 funding period, the name has been further updated to reflect evolving project goals.

TR&D 2

Unshackling the Scanners of the Future: From Rigid Control to Flexible Sensor-Rich Navigation

Principal investigators: Ryan Brown, PhD; Christopher M. Collins, PhD

In this project, we are working to refine flexible coil technologies, develop novel unconventional sensors, and bring state-of-the-art simulation tools to the research community in order to facilitate evaluation of a broad range of MRI hardware, including low-field, accessible system designs.

Over the years, TR&D 2 investigations have led us away from a traditional paradigm of control and toward increasing flexibility in coil and scanner design. We are investigating new coil applications, alternative sensing capabilities, and web-based tools that facilitate in silico experimentation with new scanner configurations.

The high-impedance flexible coil arrays we introduced in 2018 have been adapted by our researchers to facilitate imaging studies that cannot be performed in conventional static postures and to improve the quality of images obtained from low-field, accessible MR systems. We are working to further develop and disseminate such coils for MR imaging at 0.55 T and lower, and for novel kinematics studies at 3 T. In addition, we are developing leading-edge workhorse coil designs for multi-nuclear MRI studies and investigations of tissue microstructure. And, looking past unimodal applications, our researchers are investigating flexible solutions for MR-guided therapy, e.g., with our new MR-Linac system.

We are also exploring ways to integrate and correlate novel sensing with MRI data. We have begun to investigate the potential of unconventional detectors (other than RF coils) to supplement traditional MRI acquisitions with complementary information in fast and cost-effective ways. One fruit of this research direction was the Pilot Tone, a device that operates just outside the imaging frequency range and enables real-time, automatically synced tracking of respiratory motion during an MRI scan. More recently, our researchers have shown that scattering perturbations in ultra-wideband antennas can detect dielectric signatures of disease, an advance that raises the possibility of answering specific clinical questions while circumventing image formation. We have also implemented MRI-compatible time-of-flight cameras to track motion of 3D surfaces in real time. In this project, we continue to explore how to further integrate and correlate novel sensing with MRI.

Across the spectrum of magnetic field strengths, innovation in imaging hardware depends on accurate, fast, and computationally efficient numerical simulations. Members of our research team have been among the first to introduce such computational tools to the MR community, and we are now working to bring the next generation of simulation software to anyone with an internet connection and a web browser by developing open-source, user-friendly “virtual scanners.” We are also creating AI-powered, subject-specific simulators of temperature and specific absorption rate and exploring ways to predict the appearance of images on theorized unconventional MR systems.

Explore TR&D 2 on NIH RePORTER.

Note: At the time of founding of our Center, in September 2014, this project was titled “Radiofrequency Field Interactions with Tissue: New Tools for RF Design, Safety, and Control.” In August 2019, when the NIH renewed our NCBIB mandate for a second funding period, the name was updated in order to reflect new goals.

TR&D 3

Revealing Microstructure: Biophysical Modeling and Validation for Discovery and Clinical Care

Principal investigators: Els Fieremans, PhD; Dmitry Novikov, PhD

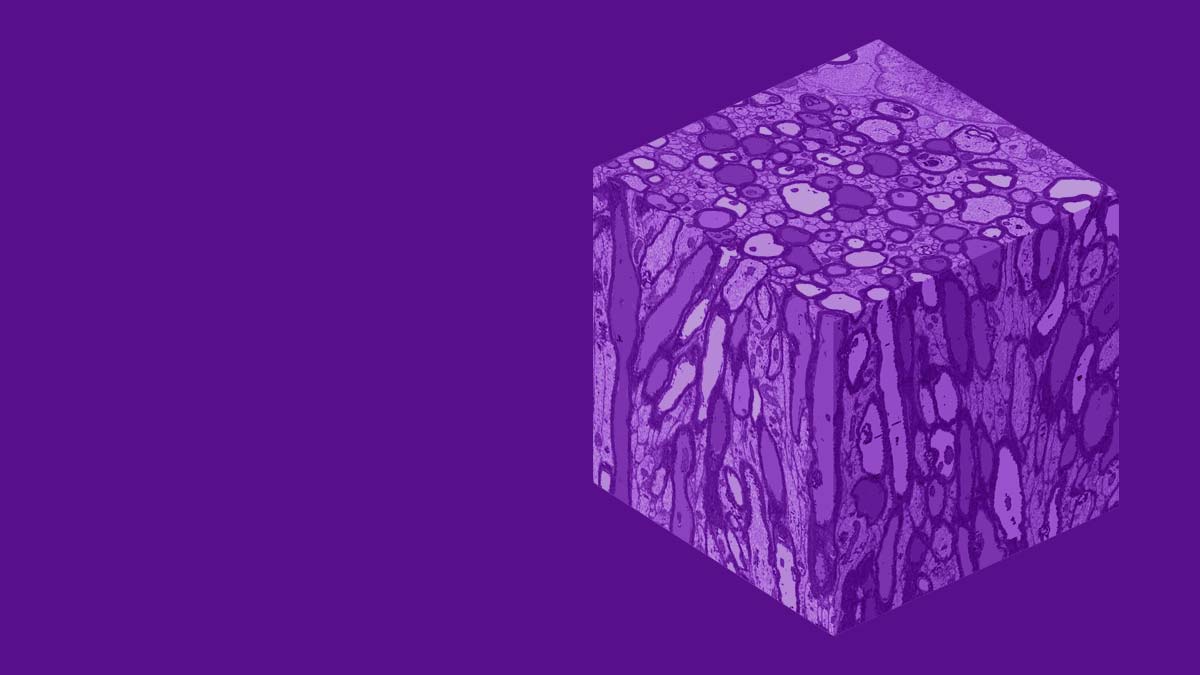

In Technology R&D Project 3, we aim to answer the fundamental question of what the MRI signal means at the cellular level. Our Center’s research in this area has resulted in key advances in both theoretical understanding and physical measurements of tissue microstructure information obtainable via MR imaging.

The MR signal can probe water diffusion, which occurs on a length scale of about 1-10 micrometers, commensurate with the scale of cellular phenomena. As a result, acquisition of diffusion MR contrasts has the potential to deliver objective measurements of structures and physiological processes that lie far below MR’s image resolution limit of approximately 1 millimeter. Diffusion metrics could thereby inform qualitative MR images with quantitative maps of cellular-scale parameters, such as cell size, water fraction, membrane permeability, and exchange rate.
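
As a rough illustration of where a length scale of 1-10 micrometers can come from, the sketch below evaluates the one-dimensional root-mean-square displacement for free diffusion, sqrt(2 D t). The diffusivity (1 µm²/ms, a round number of the order commonly quoted for water in tissue) and the diffusion times (1 to 50 ms) are illustrative assumptions, not values taken from this project, and the function name is hypothetical.

```python
import math


def rms_displacement_um(diffusivity_um2_per_ms: float, time_ms: float) -> float:
    """1D root-mean-square displacement for free (Gaussian) diffusion: sqrt(2 * D * t)."""
    return math.sqrt(2.0 * diffusivity_um2_per_ms * time_ms)


# Assumed, illustrative values (not from the source text).
D_TISSUE = 1.0  # water diffusivity in um^2/ms
DIFFUSION_TIMES_MS = [1.0, 10.0, 50.0]

for t in DIFFUSION_TIMES_MS:
    length_um = rms_displacement_um(D_TISSUE, t)
    print(f"t = {t:4.0f} ms -> {length_um:.1f} um")
```

Under these assumptions the displacement runs from roughly 1.4 µm at 1 ms to 10 µm at 50 ms, about a hundred to a thousand times smaller than a 1 millimeter voxel. Real tissue restricts and hinders diffusion, so this free-diffusion estimate is only an order-of-magnitude guide.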

We aim to integrate tissue microstructure mapping with comprehensive image data acquisitions in order to achieve specificity to microstructural changes, advance the understanding of physiology and pathology, detect disease at the earliest opportunity, monitor disease progression, and quantify efficacy of treatment.

Our overarching goal is to transform MRI from an imaging device into a precise scientific instrument for measuring microstructural tissue parameters.

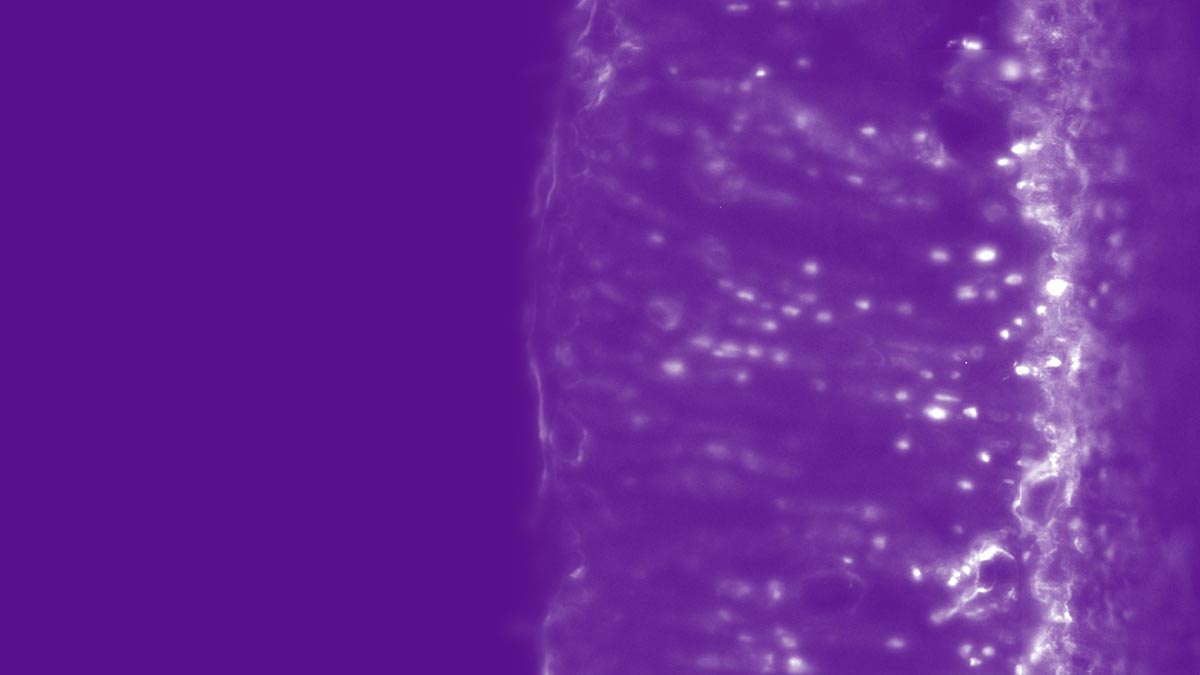

In particular, our researchers are working to extend our state-of-the-art biophysical models (e.g., by building out our “standard model” of diffusion in white matter to also include grey matter); develop microstructure-tailored MR acquisitions (e.g., by using the zero-shell imaging framework to image faster while gathering more information); and bring microstructure to clinical practice (e.g., by working with the Center’s CPs, SPs, and other TR&Ds to bring random matrix theory-based denoising protocols and the DESIGNER preprocessing pipeline to applications in neurosurgery, neuroscience, and low-field prostate MRI).

Explore TR&D 3 on NIH RePORTER.

Note: This project launched as TR&D 4 in August 2019, when the NIH renewed our NCBIB mandate for a second funding period. The project designated as TR&D 3 in 2014-2023 has concluded, and therefore the microstructure project has become the new TR&D 3 for the 2024-2029 funding period.
